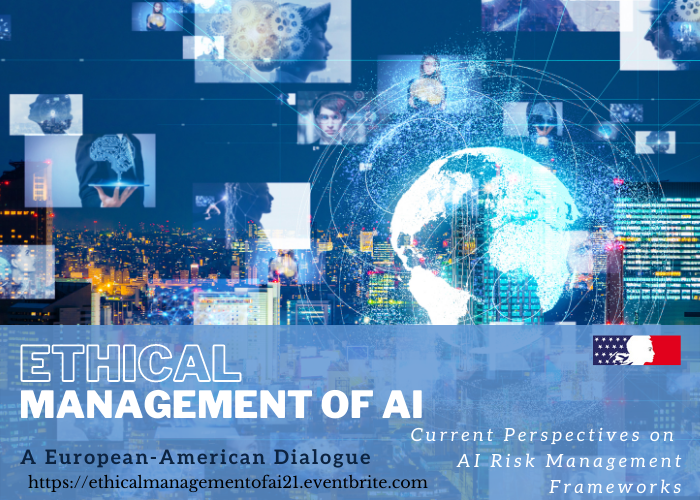

Ethical Management of AI: A European-American dialogue


Ethical Management of AI - France-Atlanta

November 2, 2021 | 8:45am

Current Perspectives on AI Risk Management Frameworks in the US and the EU.

Following the 2020 France-Atlanta Conference, the French Consulate in Atlanta (USA), the Georgia Institute of Technology (USA), the Center for Ethics at Emory University (USA) and the University of Nantes (France) are organizing a workshop which, under the same title "The Ethical Management of AI", proposes to examine the different regulatory initiatives undertaken by the European Union and by the United States of America to address the ethical risks raised by Artificial Intelligence (AI).

Should certain AI activities, uses, or goals be prohibited, or should AI be open to any field? Should AI technologies be classified according to the risks they involve? If so, which risks should be considered? Should a framework be established to define the level of AI risk? If so, how should those risks then be mitigated? Would such an approach be effective in tackling the ethical challenges?

In spring 2021, the European Commission introduced a legislative proposal, the "Proposal for a regulation laying down harmonized rules on artificial intelligence", which includes provisions on prohibited practices. If adopted, the proposal would establish an AI risk management system in which AI practices would be classified according to their level of risk and made subject to corresponding obligations. Meanwhile, among the many bills introduced, the US Congress has adopted two Acts: the "AI Government Act 2020" and "The National Artificial Intelligence Act 2020". In addition, at the direction of Congress, NIST is developing a "consensus-driven", "voluntary" guidance document, the AI Risk Management Framework, which is also based on a risk management approach (see ai.gov).

The workshop will therefore offer a general presentation and discussion of these European and American approaches from public, private, and broader perspectives, covering the current criteria for defining levels of AI risk, the tools for assessing them, and the resulting obligations. It will also examine how these frameworks, if adopted, could "capture" the ethical management of AI and address AI ethics effectively.

The workshop will be held virtually on Tuesday, November 2, 2021, at hours scheduled to accommodate time zones in both Europe and the US. It will be divided into two main sessions: one dedicated to evaluating AI risks through their definition and the tools currently available, and the other focused on how these regulatory frameworks can meet the ethical challenges of AI.
